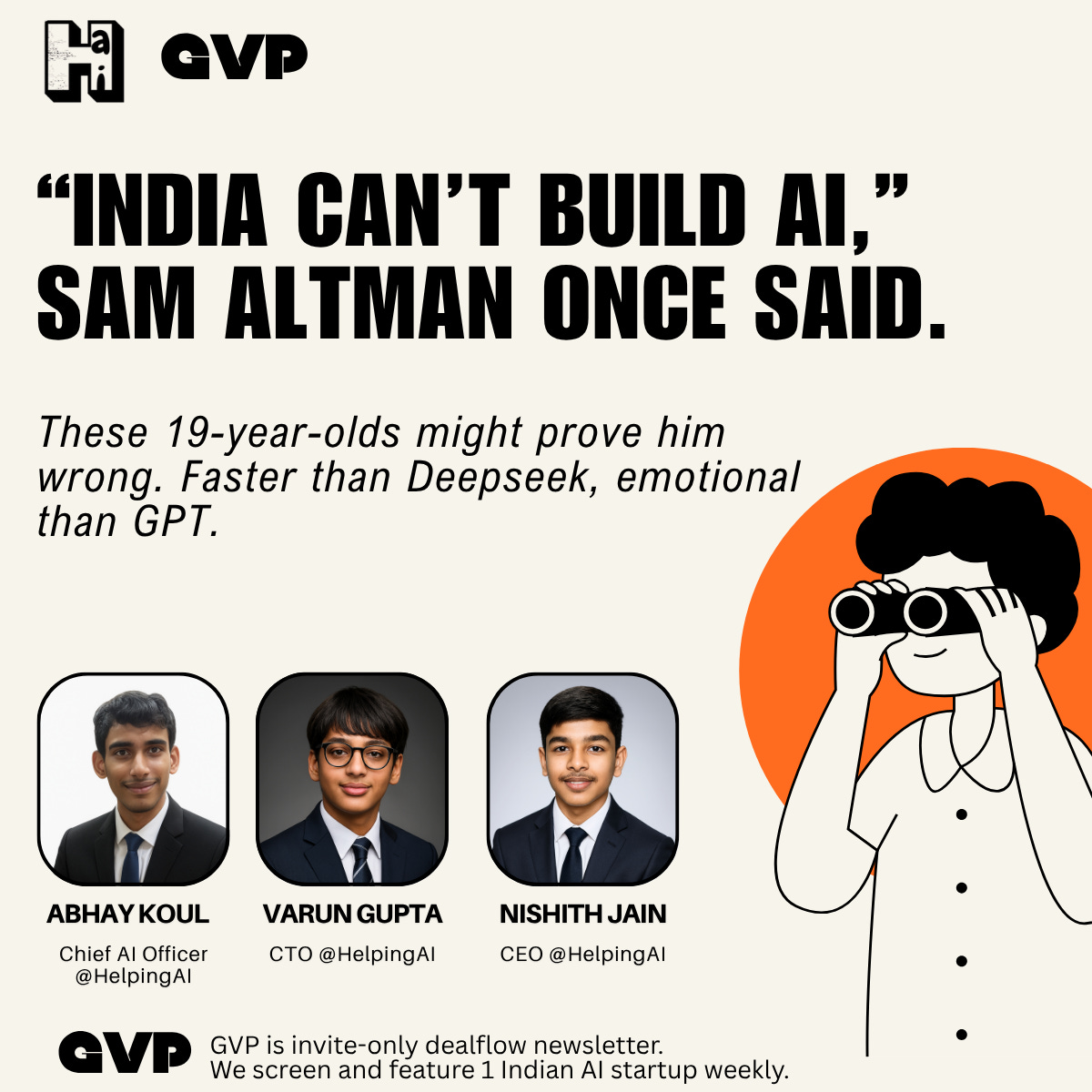

“India can’t build AI,” Sam Altman once said.

These 19-year-olds might prove him wrong.

OpenAI spent $700 million on compute last year.

Google DeepMind burned through billions.

Anthropic raised $4 billion just to keep Claude running.

And yet... three 19-year-old kids with a $200 GPU just made them all look stupid.

The $700 Million Mistake Everyone's Making

Last month, I was talking to the CTO of a unicorn startup. Smart guy. MIT PhD. Raised $100M.

He told me something that blew my mind:

"We're spending $50,000 a month on OpenAI. But here's the crazy part—GPT-4 throws away 80% of its computation. It thinks about everything, even simple questions that need zero thinking."

"It's like hiring Einstein to do your grocery shopping. Expensive and completely unnecessary."

That's when it hit me.

The entire AI industry has been optimizing for the wrong thing.

They're building models that think as hard as possible about everything. Maximum compute, maximum cost, maximum complexity.

But what if the breakthrough isn't thinking harder?

What if it's thinking smarter?

Then they all look stupid.

The 19-Year-Olds Who Broke Billion-Dollar Logic

Three Indian teenagers, Abhay, Varun, and Nishith, were trying to use AI for their school projects. But they ran into one problem:

GPT-4: Too costly.

DeepSeek: Too slow.

Open-source: Too basic.

So they did what teenagers do when adults fail them. They built their own.

But here's where it gets interesting.

Instead of copying Silicon Valley's "think harder" approach, they asked a different question:

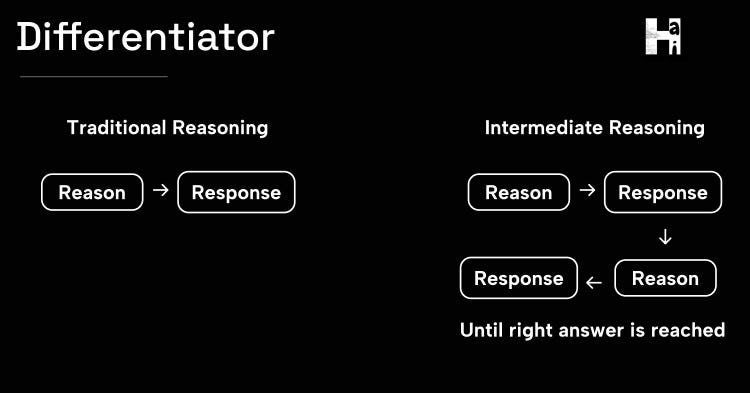

"What if AI could think differently at different moments?"

Simple questions get simple thinking. Complex problems get deep reasoning. And mid-conversation? The AI can literally change its mind and course-correct.

They called it intermediate reasoning. And it changes everything.
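To make the idea concrete, here is a toy sketch of that control flow: answer simple questions directly, and for complex ones think, respond, check the draft, and revise if needed. It is an illustration only, not how Dhanishtha-2.0 is implemented. The `looks_complex` heuristic and the `draft`, `critique`, and `revise` callables are hypothetical stand-ins for model calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Trace:
    steps: List[str] = field(default_factory=list)  # order of think/respond phases
    answer: str = ""


def looks_complex(question: str) -> bool:
    # Hypothetical heuristic: long or multi-part questions get deeper reasoning.
    markers = ("why", "prove", "design", "compare", "step")
    text = question.lower()
    return len(text.split()) > 12 or any(m in text for m in markers)


def answer(
    question: str,
    draft: Callable[[str], str],
    critique: Callable[[str, str], Optional[str]],
    revise: Callable[[str, str], str],
    max_rounds: int = 2,
) -> Trace:
    trace = Trace()
    hard = looks_complex(question)
    if hard:
        trace.steps.append("think")  # reason before the first response
    trace.answer = draft(question)
    trace.steps.append("respond")
    if not hard:
        return trace  # simple question: no extra thinking
    for _ in range(max_rounds):
        issue = critique(question, trace.answer)
        if issue is None:
            break  # draft is good enough, stop here
        trace.steps.append("think")  # course-correct mid-response
        trace.answer = revise(trace.answer, issue)
        trace.steps.append("respond")
    return trace


# Stub "model calls" so the sketch runs on its own.
def draft(q: str) -> str:
    return "draft answer"


def critique(q: str, a: str) -> Optional[str]:
    return None if a.endswith("(revised)") else "missing edge case"


def revise(a: str, issue: str) -> str:
    return a + " (revised)"


simple = answer("What is the capital of France?", draft, critique, revise)
hard = answer("Design a rate limiter and compare two approaches", draft, critique, revise)
print(simple.steps)  # ['respond']
print(hard.steps)    # ['think', 'respond', 'think', 'respond']
```

The point of the sketch is the shape of the loop: thinking is spent only where the question calls for it, and a second pass happens only when the first answer falls short.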

What does Dhanishtha-2.0 do that no billion-dollar model can?

It thinks twice. But only when it needs to.

Normal AI: [Thinking intensely for 30 seconds] "The capital of France is Paris."

Dhanishtha-2.0: [0.2 seconds] "The capital of France is Paris."

Normal AI: [Thinking intensely for 30 seconds] "Here's a basic Python function..."

Dhanishtha-2.0: [0.2 seconds] "Here's a basic Python function..."

But ask something complex?

Normal AI: [Thinks once, gives mediocre answer]

Dhanishtha-2.0: [Thinks → Responds → Realizes it can do better → Thinks again → Improves answer in real-time]

The result?

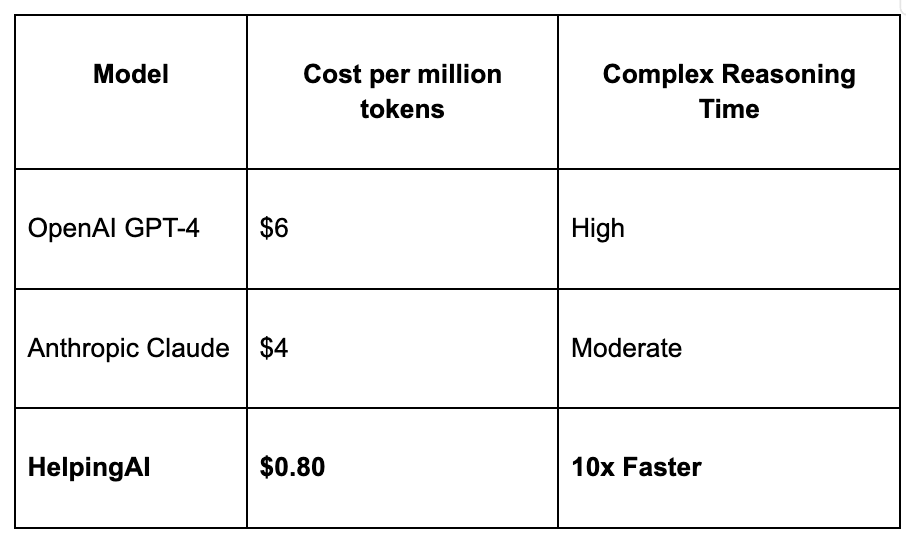

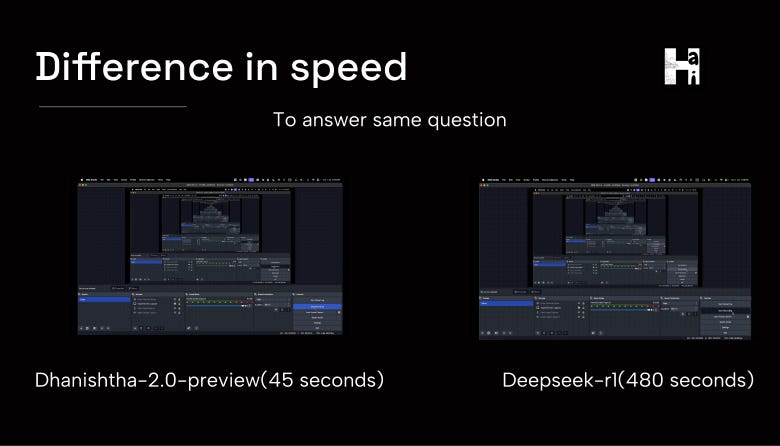

10x faster than "reasoning" models like DeepSeek-R1

5x fewer tokens than GPT-4

More accurate answers because it self-corrects mid-response

Why Big Tech Should Be Worried

What it means for AI startups:

10x faster reasoning speed than DeepSeek-R1

5x fewer tokens per query

87% cost savings vs. leading reasoning models

Makes AI agents 5x more efficient

Meet the Founders

Chief AI Officer: AI prodigy, recognized by HuggingFace and Stanford professors, currently pursuing Data Science at IIT Madras.

CEO: Moderator of India’s largest AI community (r/ai_india), experienced AI practitioner.

CTO: Scaled a tech agency to six figures in 3 months, expertise in API-driven products.

GVP Take

I've seen this movie before.

AWS vs. traditional hosting: "Why pay $10,000/month for a server when you can pay $100?"

Stripe vs. traditional payments: "Why take 3 weeks to integrate payments when you can do it in 3 lines of code?"

Figma vs. Adobe: "Why pay $600/year for software when you can use better tools for free?"

Now it's happening in AI.

HelpingAI vs. OpenAI: "Why pay $6 per million tokens for slow, robotic responses when you can get faster, more human AI for $0.80?"
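As a rough sanity check on those prices, the arithmetic can be sketched in a few lines. The two per-million-token prices come from the comparison above. The monthly token volume is a hypothetical figure chosen only for illustration.

```python
def monthly_cost(tokens_millions: float, price_per_million: float) -> float:
    """Cost in dollars for a given monthly volume, measured in millions of tokens."""
    return tokens_millions * price_per_million


def savings_fraction(old_price: float, new_price: float) -> float:
    """Fraction of spend saved by moving from old_price to new_price."""
    return 1 - new_price / old_price


INCUMBENT_PRICE = 6.00    # $ per million tokens, as quoted above
CHALLENGER_PRICE = 0.80   # $ per million tokens, as quoted above
MONTHLY_TOKENS_M = 1_000  # hypothetical volume: 1 billion tokens per month

savings = savings_fraction(INCUMBENT_PRICE, CHALLENGER_PRICE)
before = monthly_cost(MONTHLY_TOKENS_M, INCUMBENT_PRICE)
after = monthly_cost(MONTHLY_TOKENS_M, CHALLENGER_PRICE)

print(f"Savings: {savings:.1%}")  # Savings: 86.7%
print(f"Monthly bill: ${before:,.0f} -> ${after:,.0f}")
```

At these list prices the saving works out to roughly 87%, in line with the figure cited earlier. It holds only if both models need about the same number of tokens per query. If the cheaper model also uses fewer tokens, the gap widens further.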

The pattern is always the same:

Incumbents optimize for enterprise customers with unlimited budgets

Scrappy upstarts optimize for everyone else

"Everyone else" turns out to be 90% of the market

Game over.
